The U.S. Department of Health and Human Services (HHS) is engineering a sophisticated Large Language Model (LLM) to extract injury hypotheses from federal vaccine data, a development that coincides with a radical overhaul of immunization policies under Secretary Robert F. Kennedy Jr. Internal documents reveal the tool has been in development since late 2023, though it has not yet been deployed for official use. While the technology aims to streamline data analysis, public health experts express profound concern that the generated predictions could be weaponized to advance an anti-vaccine agenda and undermine confidence in established medical protocols.

A Shift Toward Generative AI in Public Health Surveillance

The move to advanced AI models is an evolution of the traditional natural language processing techniques that government scientists previously used to identify patterns in vaccine data. Leslie Lenert, Director of the Center for Biomedical Informatics and Health Artificial Intelligence at Rutgers University and a former CDC official, notes that while adopting LLMs is a logical technical step, the exploratory nature of these models requires rigorous human oversight. LLMs are prone to “hallucinations,” generating convincing but false information, so distinguishing legitimate safety signals from statistical noise demands a high level of expertise.

The Inherent Noise of the VAERS Database

The primary data source for this new AI tool is the Vaccine Adverse Event Reporting System (VAERS), a database jointly managed by the CDC and the FDA since 1990. Designed as an early-warning system, VAERS allows anyone, including healthcare providers and members of the general public, to submit reports of health events following vaccination. However, the reports are unverified and the system has no control group, so causation cannot be determined from VAERS data alone. Paul Offit, a pediatrician and Director of the Vaccine Education Center at Children’s Hospital of Philadelphia, emphasizes that VAERS is a “noisy system” meant only for hypothesis generation, not as proof of vaccine-related injury.

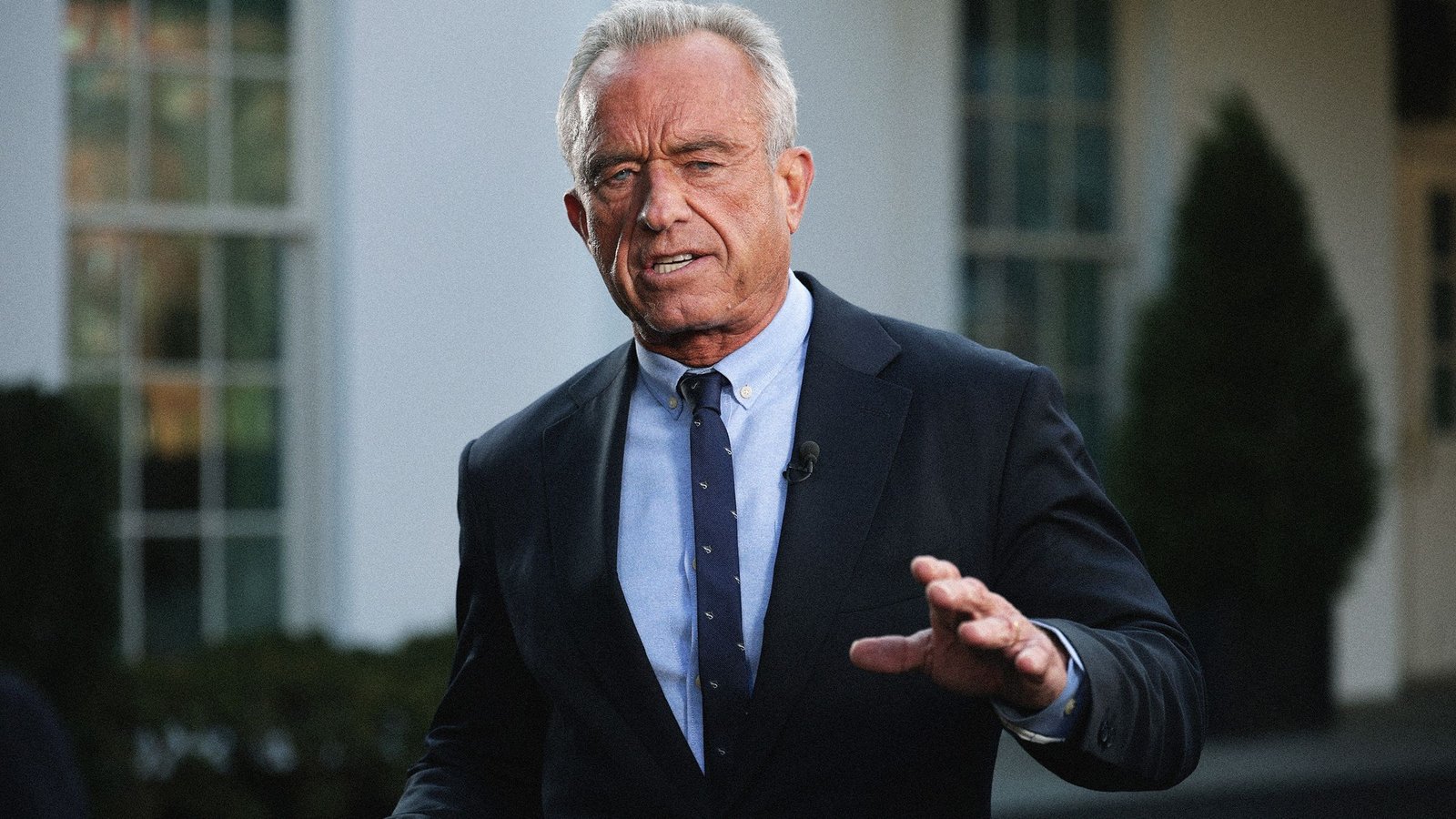

Policy Overhauls and the Removal of Recommended Immunizations

The development of this AI tool occurs against a backdrop of significant shifts in federal health policy. Secretary Robert F. Kennedy Jr. has already moved to dismantle established childhood vaccination schedules, removing several key immunizations from the list of recommended shots. These include vaccines against Covid-19, influenza, hepatitis A and B, rotavirus, respiratory syncytial virus (RSV), and meningococcal disease. Kennedy has frequently criticized the current safety monitoring infrastructure, alleging that VAERS suppresses the true extent of vaccine side effects. He has also proposed changes to the Vaccine Injury Compensation Program that would lower the threshold for litigation against manufacturers.

Institutional Conflict Over Evidence-Based Regulation

Tensions within federal agencies have escalated following a memo from Vinay Prasad, Director of the FDA’s Center for Biologics Evaluation and Research, who proposed stricter vaccine regulations. Prasad asserted, without providing supporting evidence, that the deaths of at least 10 children were linked to the Covid-19 vaccine, even though FDA staff had previously reviewed and dismissed these cases. In a rare public rebuke, more than a dozen former FDA commissioners published a letter in The New England Journal of Medicine, warning that such policy shifts threaten to “dramatically change vaccine regulation on the basis of a reinterpretation of selective evidence.”

The Challenge of False Alerts and Staffing Shortages

While AI has the potential to detect previously unknown safety issues, as VAERS did with rare clotting disorders following the Johnson & Johnson vaccine and myocarditis following mRNA shots, experts warn of a high rate of false alerts. Jesse Goodman, a professor at Georgetown University and former FDA official, argues that the output from an LLM requires a massive capacity for skilled human follow-through. With the CDC facing deep staffing cuts, the agency’s ability to accurately screen, study, and validate emerging data remains in question. As of publication, HHS has not responded to requests for comment regarding the deployment timeline or the specific parameters of the AI tool.